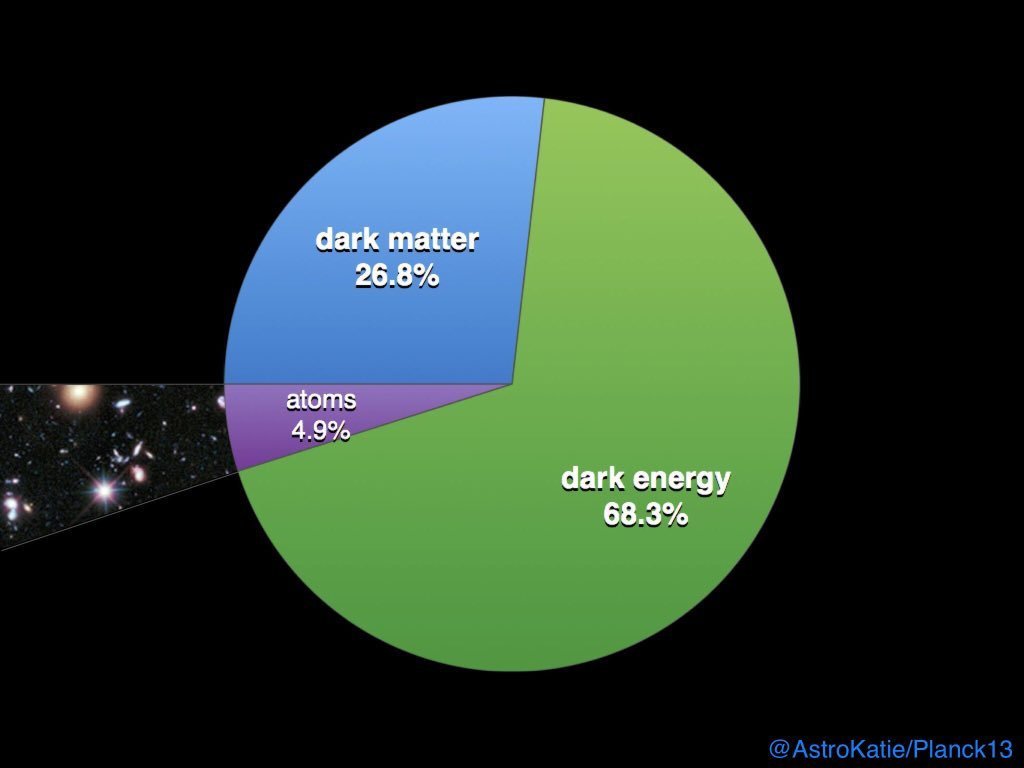

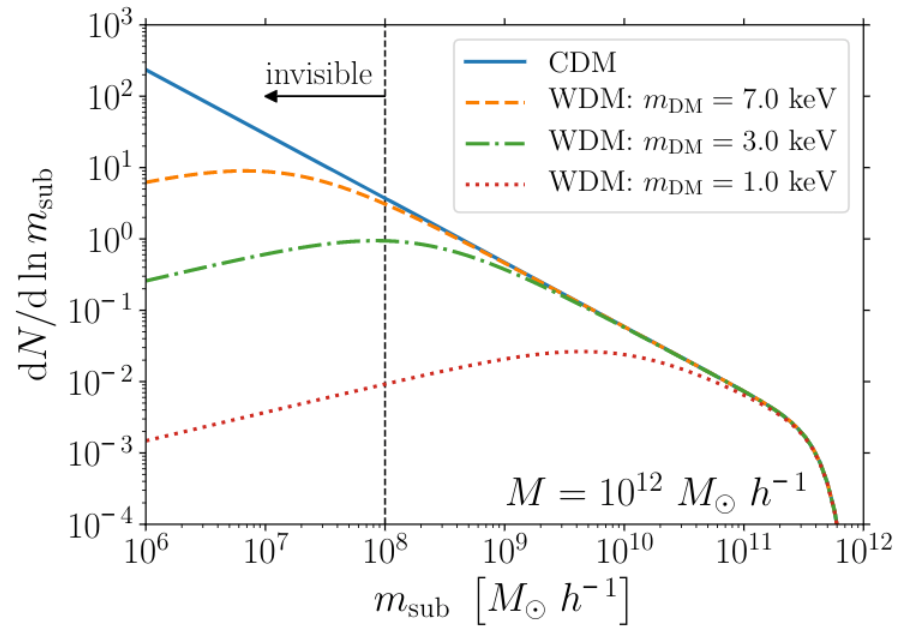

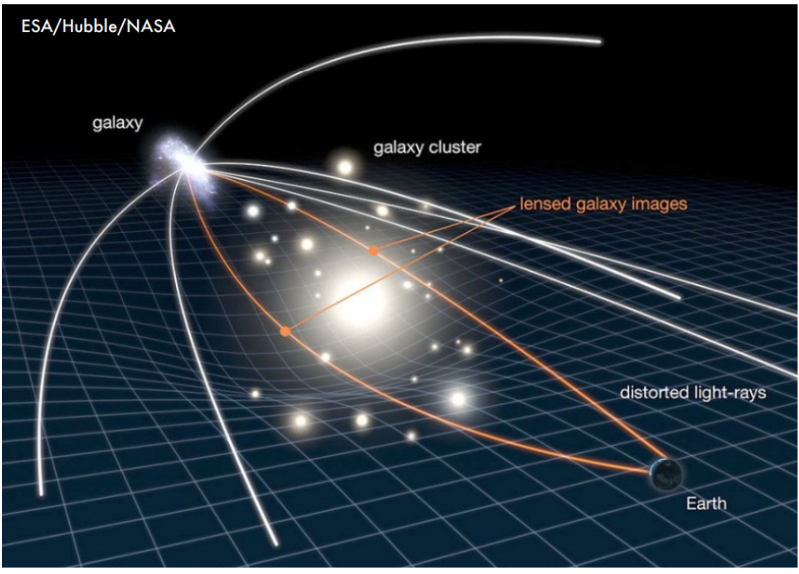

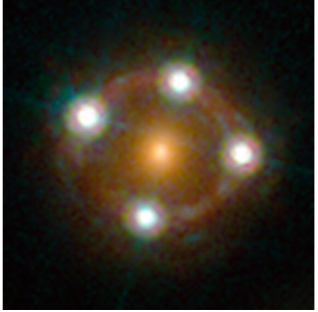

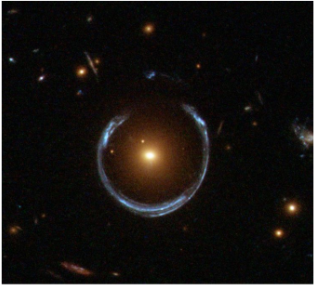

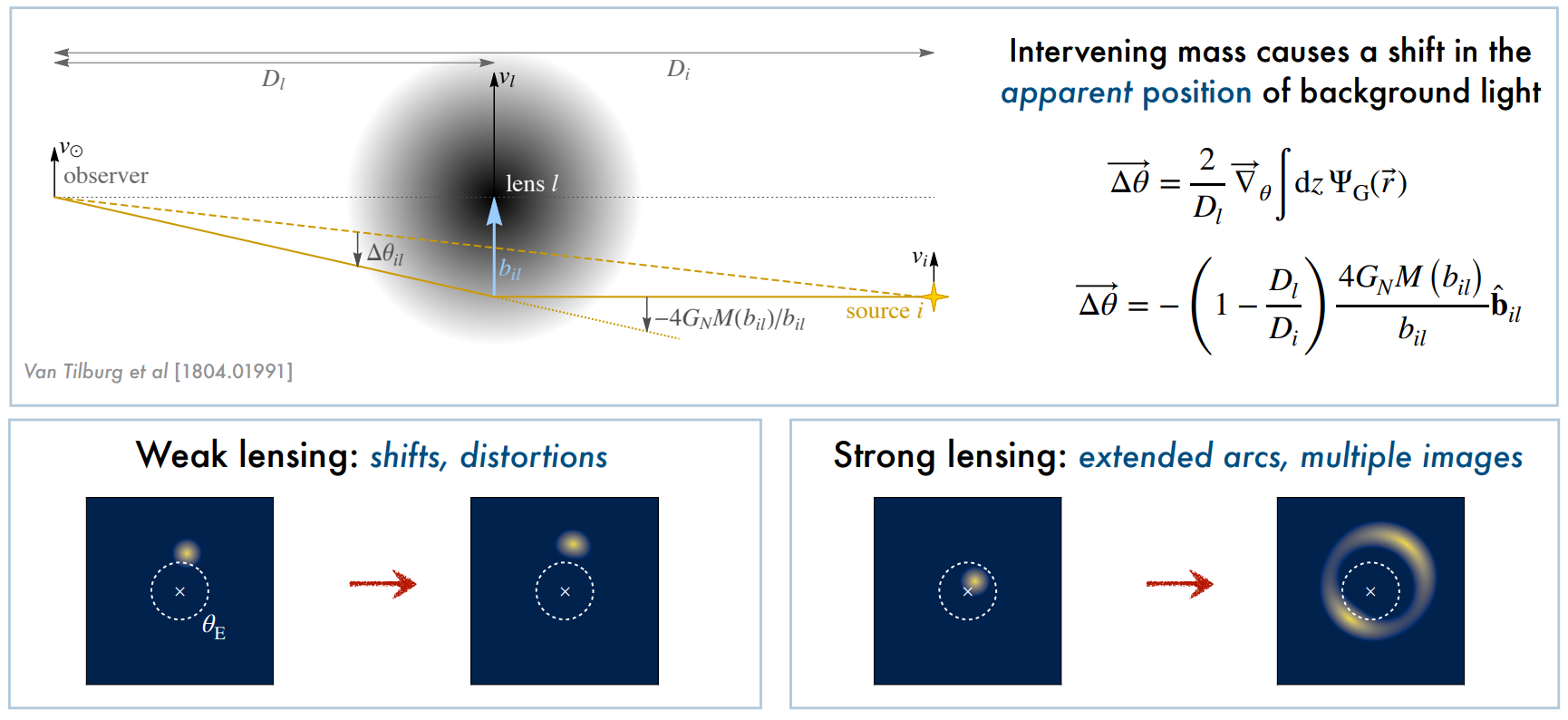

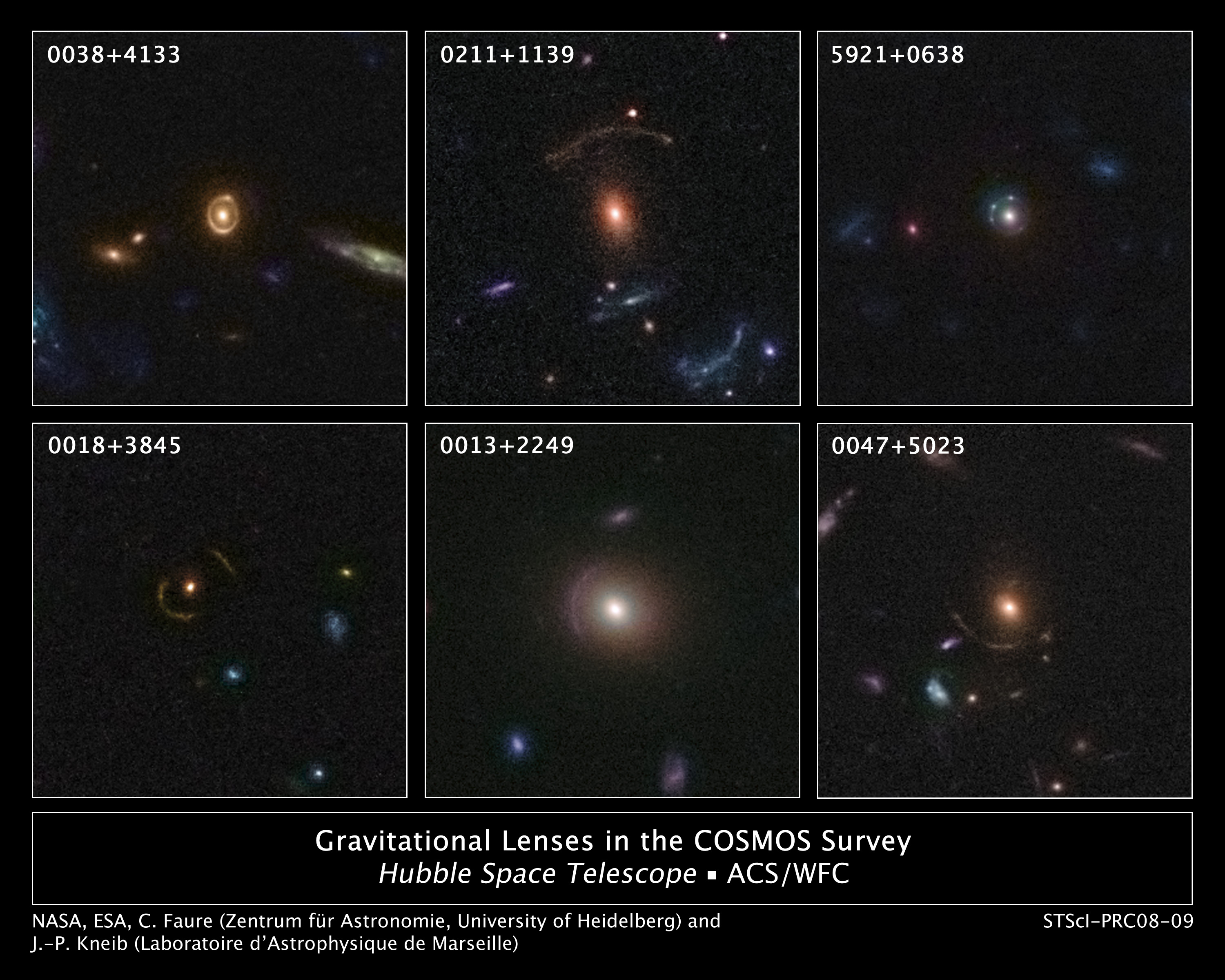

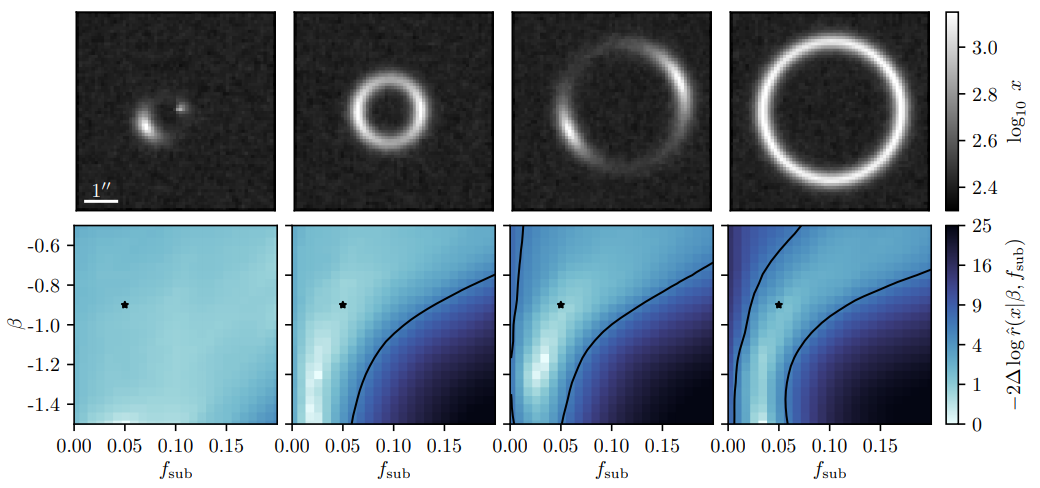

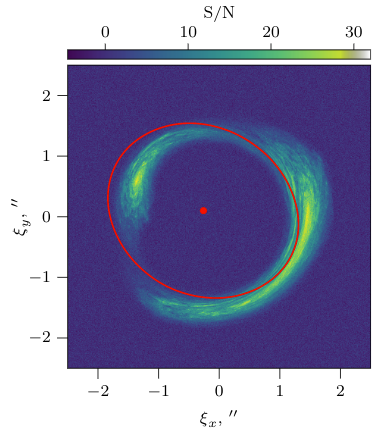

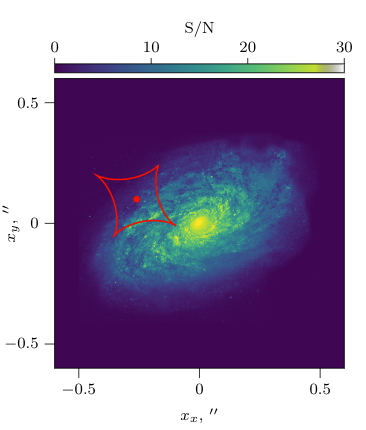

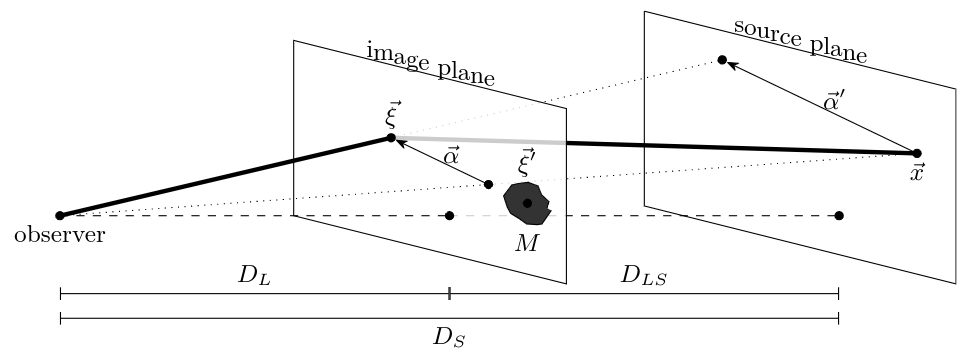

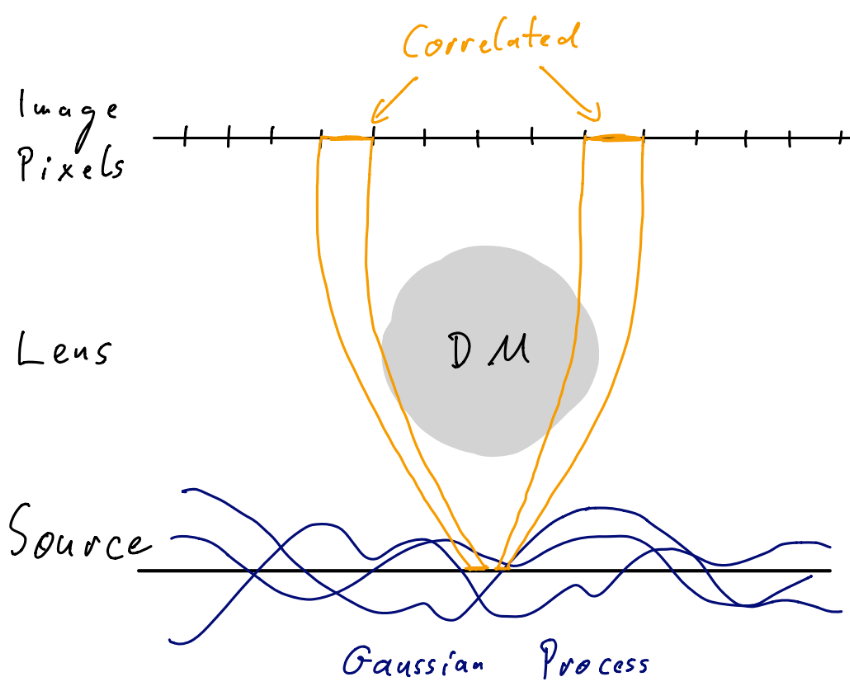

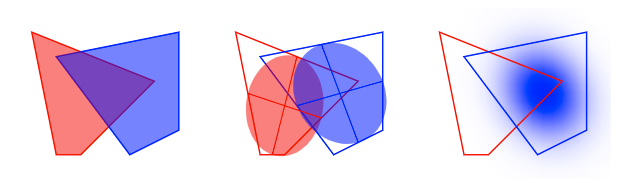

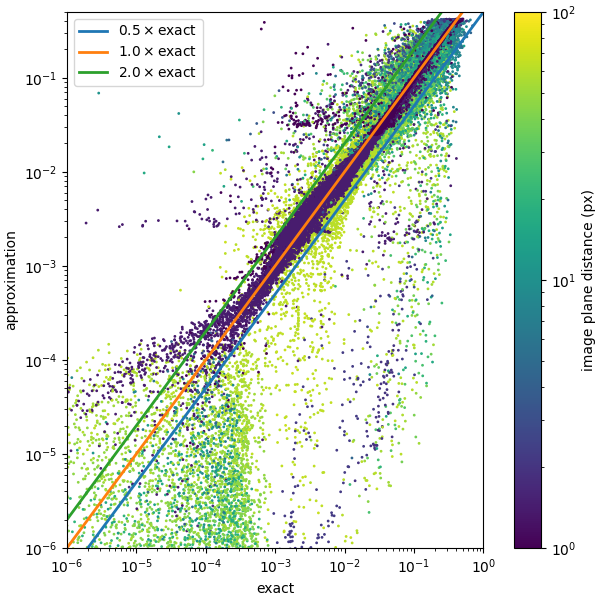

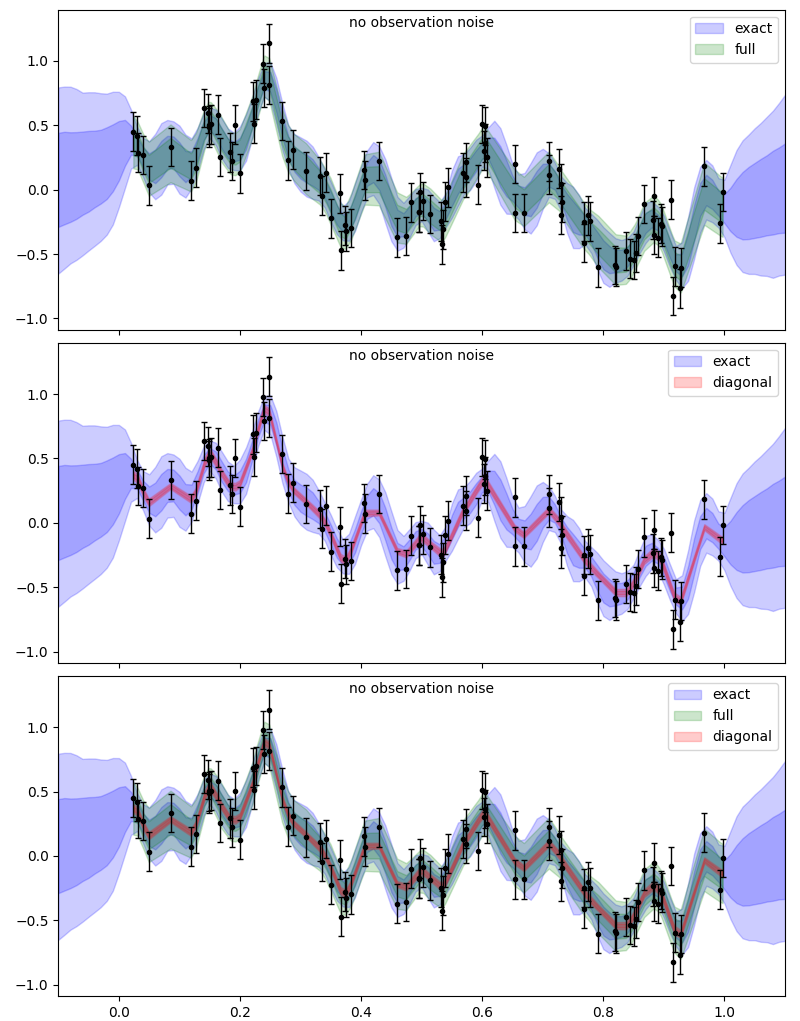

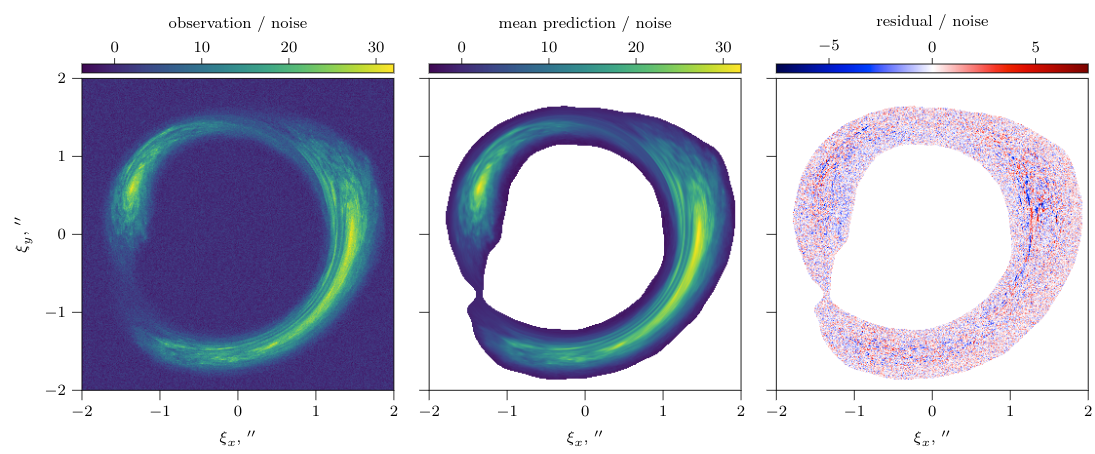

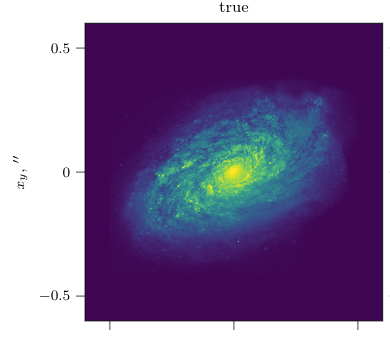

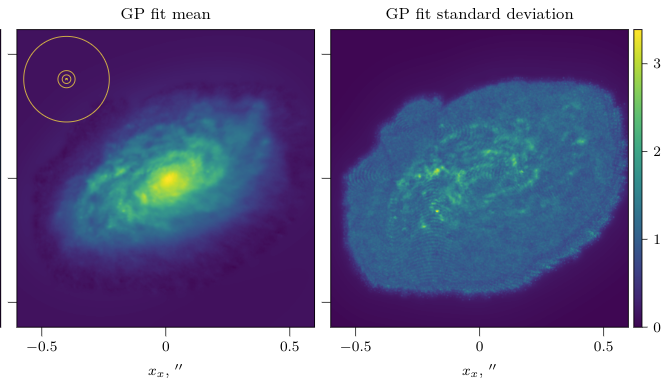

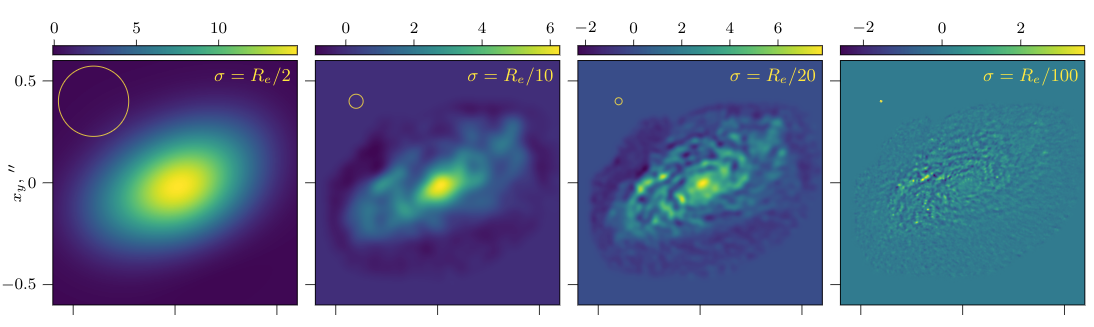

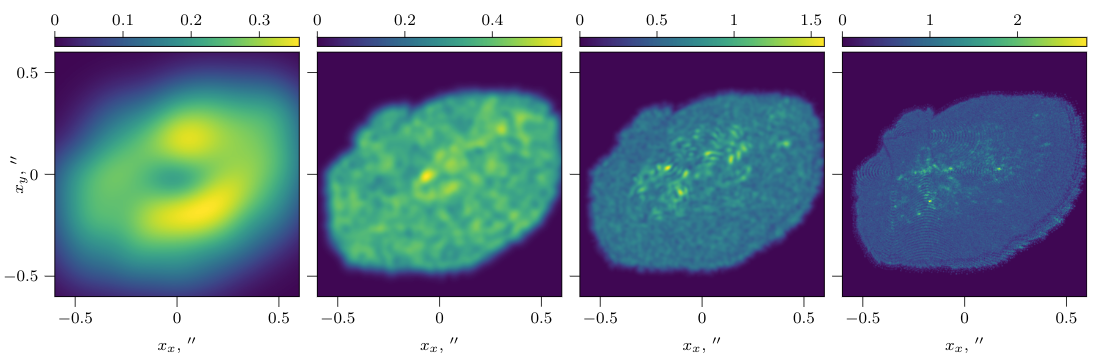

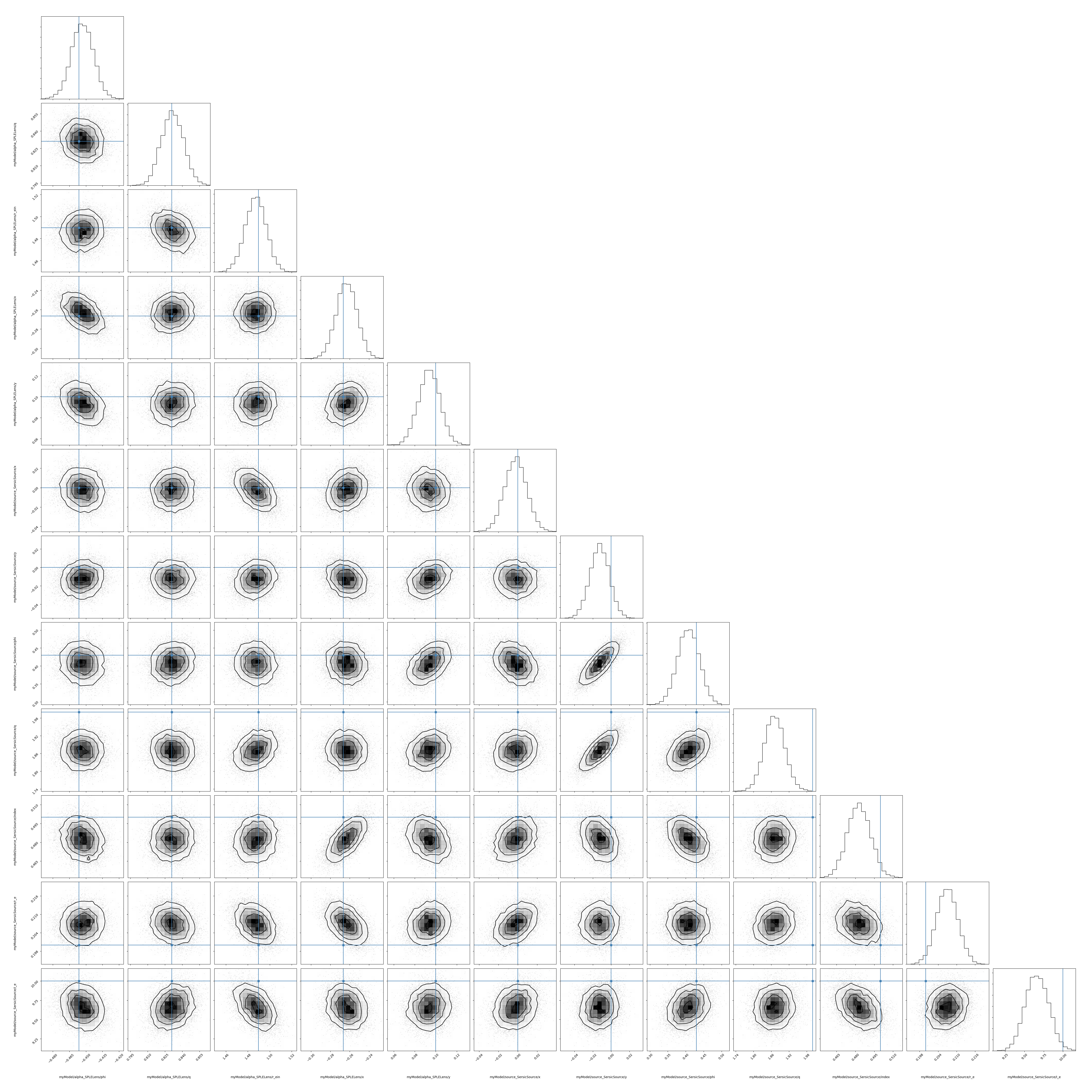

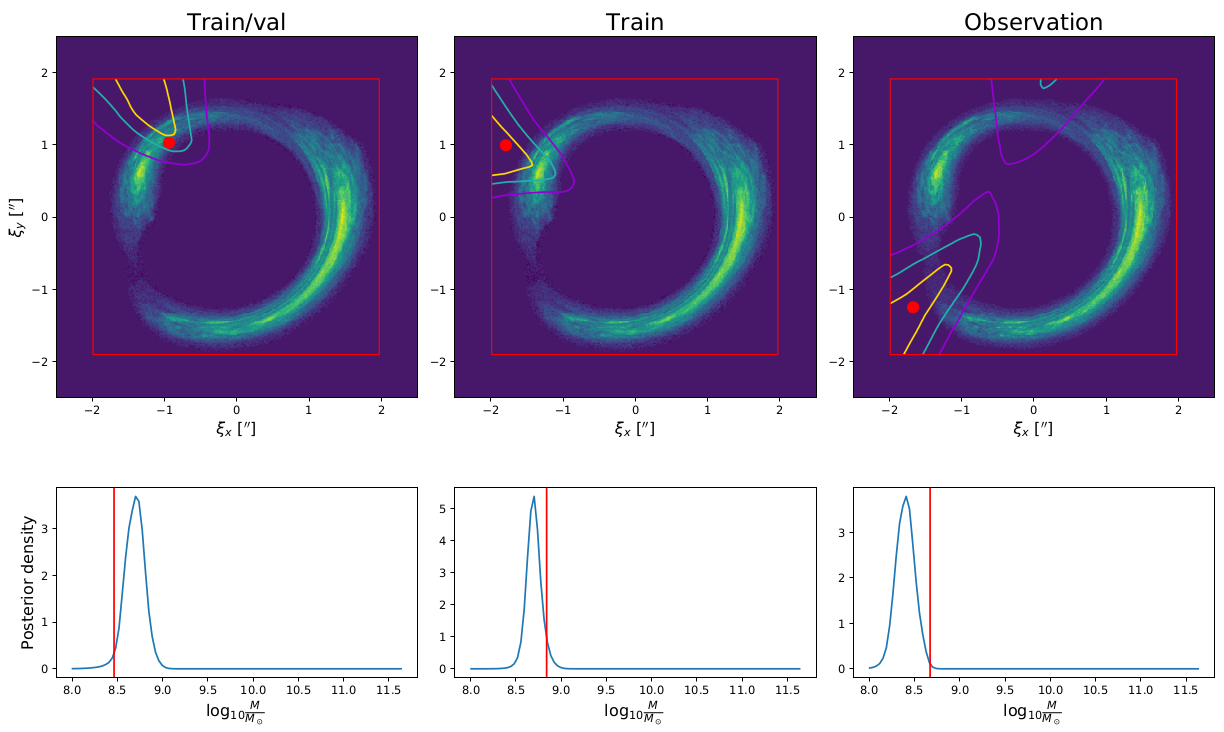

class: title-slide # Precision analysis of gravitational strong lensing images with nested likelihood-free inference .center[Ongoing work with: Marco Chianese (GRAPPA), Adam Coogan (GRAPPA), Kosio Karchev (SISSA/GRAPPA), Ben Miller (AI4Science/GRAPPA) ] .center[Christoph Weniger (GRAPPA/IoP/UvA)] .right[29 September 2020 AI4Science Colloquium] <br> <br> <br> <!-- Physics motivation strong lensing --> --- class: black-slide, center # The energy budget of the Universe .center[.width-70[]] ## What is Dark Matter made of? --- class: center # Dark matter candidates .center[.width-65[]] .footnote[Image credit: Bertone/Tait 2018] --- class: black-slide, center, bottom background-image: url(figures/illustris_box_z0124_4panel.png) background-size: contain ## Evolution of structure in the Universe (bottom to top) Dark matter overdensities collapse into increasily large halos. Large enough halos are sites of star and galaxy formation. ## Details depend on the particle nature of dark matter! .footnote[Source: The Illustris project, [illustris-project.org](https://illustris-project.org)] --- class: center, black-slide # Dark matter mass vs substructure .center[.width-65[]] Hot (left), warm (middle), and cold (right) dark matter at early times (top) and and late times (bottom) .footnote[Image credit: ITC @ University of Zurich] --- # Dark matter mass vs substructure Amount of substructure depends on the dark matter particle mass. .center[.width-65[]] .red.center[Absence of substructure implies that the Cold Dark Matter paradigm is wrong.] .footnote[Image credit: Chianese, Marco. 2019. “Differentiable Probabilistic Programming for Strong Gravitational Lensing.” arXiv [astro-ph.CO]. arXiv. https://doi.org/10.22323/1.358.0515] --- class: black-slide, center background-image: url(figures/A_Horseshoe_Einstein_Ring_from_Hubble.jpeg) background-size: cover # Strong gravitational lensing ## A "horseshoe Einstein Ring from Hubble" <br> <br> <br> <br> <br> <br> <br> <br> <br> <br> <br> <br> <br> <br> The gravity of a luminous red galaxy has gravitationally distorted the light from a much more distant blue galaxy. .footnote[Obsevation with Hubble Space Telescope's Wide Field Camera 3.] --- class: black-slide # Strong lensing .grid[ .kol-2-3[ .center[.width-100[]] ] .kol-1-4[ Lensed quasar .width-100[] Lensed galaxy .width-100[] ] ] --- # Weak and strong lensing .width-100[] .footnote[Slide credit: Siddharth Mishra-Sharma (NYU)] --- # The effect of dark matter substructure .width-100[] .footnote[Slide credit: Siddharth Mishra-Sharma (NYU)] --- # Signature of interest is .underline[very small] ## For mock images with different subhalos .center[.width-100[]] .center[Contour lines show reconstruction with neural network (more below)] .red.center[Images look virtually identical without stats analysis] .footnote[Preliminary, Coogan+ 2020 in preparation] --- class: black-slide # Variance between images is .underline[very large] .center.width-80[] --- class: black-slide, center, middle # Can Machine Learning techniques be used to perform precision analysis of these images? --- # Likelihood vs generative models ## We model the world from first principles - Data analyses usually starts with **forward models**, i.e. we an write down $P(x|z)$ from first principles (cp. with cancer images) - In many cases, we have some idea about reasonable **priors** $P(z)$. - This defines **generative model**: $$x, z \sim P(x, z) \equiv P(x|z)P(z)$$ ## Not only lensing, also... .grid[ .kol-1-3[Neutron stars .width-100[]] .kol-1-3[Gravitational waves .width-100[]] .kol-1-3[Particle colliders .width-100[]] ] --- # The inverse problem Given some data $x$, what does this tell me about $z$? .grid[ .kol-2-5[ **Bayes' theorem** $$ P(z|x) = \frac{P(x|z) P(z)}{P(x)} $$ ] .kol-3-5[ - Likelihood: $P(x|z)$ - Prior: $P(z)$ - Posterior: $P(z|x)$ - Evidence: $P(x) \equiv \int dz\, P(x|z) P(z)$ - (**Generative model**: $P(x, z) \equiv P(x|z)P(z)$) ] ] -- count: false This gives the **joined posterior** for the full latent space $z\in \mathbb{R}^n$. Almost always, we want however the **marginal posterior**, say, for $\theta\in \mathbb{R}$, where $z\equiv(\theta, \eta)$ and $\eta \in \mathbb{R}^{n-1}$ are nuisance parameters. $$ P(\theta|x) = \int d\eta\, P(\theta, \eta|x) $$ Alas, this integral is usually **intractable**. --- # Solution: Monte Carlo Integration etc! Likelihood ratios $P(x|\theta)/P(x|\theta')$ are central input for most sampling algorithms .grid[ .kol-1-2[ - Markov Chain Monte Carlo - Gibbs sampling - Nested sampling - Hamiltonian Monte Carlo (also needs gradients) - ... ## Results - samples from **joint posterior**, $z \sim P(z|x)$ (can be trivially marginalized) - maybe value of marginal likelihood $P(x)$ (in the case of nested sampling) ] .kol-1-2[ Typical example: .right[.width-90[]] ] ] --- # The traditional analysis .center.width-80[] ## Challenges - Hyper-parameter optimization requires performing above analysis on a grid, to optimize Bayesian evidence - No source uncertainties can be reconstructed - Very hard to incorporate additional components in the lens model (multiple subhalos), since posteriors become highly multi-modal .footnote[E.g. Vegetti+ 2009, 2014] --- # First approaches using neural networks Example: Brehmer+ 2019. The authors train classification networks to estimate posteriors for subhalo parameters. .center.width-80[] Very promising in context of this toy scenario .red.center[How can this method be successfully applied to real complex images?] .footnote[From Brehmer, Johann, Siddharth Mishra-Sharma, Joeri Hermans, Gilles Louppe, and Kyle Cranmer. 2019. “Mining for Dark Matter Substructure: Inferring Subhalo Population Properties from Strong Lenses with Machine Learning.” arXiv [astro-ph.CO]. arXiv. http://arxiv.org/abs/1909.02005] --- # Here: targeted inference networks <br> <br> .center[Instead of training a single all-purpose inference network, we build an analysis pipeline where .red[inference networks are trained individually for each image]] -- count: false $$\Rightarrow$$ .large.center.red[Trading analysis time for precision] -- count: false <br> ## Challenges - How to generate training data for targeted training? - How to make the pipeline sufficiently fast to be applicable to O(100) or more images? --- # Our strategy ## Step 1: Generate image-specific training data with variational methods - Perform high-dimensional fits - Component separation - Hyper-parameter optimization - Can be used to generate training data ## Step 2: Train inference network to anlayse dark matter structures - Posterior estimation with targeted neural ratio estimation --- # Step 1: Variational inference ## The goal: Interverting the lensing effect and recovering the original source image .center[.width-40[] .width-40[]] .center[How to get from the image (left) to the reconstructed source (right)?] .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # The math of strong lensing .center[ .width-80[] ] - We have to reconstruct the original source galaxy, $I_{src}(\Omega)$ (right) - Sources are complex, tenthousand of parameters. - We have to reconstruct the mass distribution in the lens plane, $\Sigma_{lens}(\Omega)$ (middle) - We keep the mass distribution simple (analytic model with about 10 parameters). .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Modeling the source as Gaussian process .center[.width-65[]] - Hyper-parameter optimization through maximization of marginal likelihood - Kernel is simple in source plane - But: Lensing causes complicated kernal structure in image plane $\Rightarrow$ **existing approaches/libraries were insufficient for our use-case** .footnote[Image source: https://blog.dominodatalab.com/wp-content/uploads/2017/03/output_75_0-1.png] --- # Factorized Gaussian process ## Covariance of image pixels from indicator functions and GP kernel $$ K_{ij} = k(\mathbf{\vec{p}}_i - \mathbf{\vec{p}}_j) = \int \text{d}\vec{x}_1 \text{d}\vec{x}_2 \ f_i(\mathbf{\vec{p}}_i - \vec{x}_1) \ k_0(\vec{x}_1 - \vec{x}_2) \ f_j(\mathbf{\vec{p}}_j - \vec{x}_2) $$ .grid[ .kol-2-5[ Approximate factorization $$ K \approx T T^T $$ allows to write $$ \mu\_i = \sum\_j T_{ij} y_j $$ $$ y_j \sim \mathcal{N}(0, 1) $$ ] .kol-3-5[ .width-80[] ] ] .center[ This implies that $\text{Cov}(\mu\_i, \mu\_j) = K\_{ij}$ in the image plane. ] --- # Factorization of covariance matrix .center[] The intergral $$ K_{ij} = k(\mathbf{\vec{p}}_i - \mathbf{\vec{p}}_j) = \int \text{d}\vec{x}_1 \text{d}\vec{x}_2 \ f_i(\mathbf{\vec{p}}_i - \vec{x}_1) \ k_0(\vec{x}_1 - \vec{x}_2) \ f_j(\mathbf{\vec{p}}_j - \vec{x}_2) $$ becomes easy to evaluate if we approximate pixel indicator functions with multivariate Gaussians. - .red[Integral can be expressed in terms of multivariate Gaussian]. - By replacing $k_0$ with $\sqrt{k_0}\sqrt{k_0}$, we can factorize $K$. .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Exact vs approx. correlation coefficients .center.width-60[] .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Reverse KL divergence Goal: Find $q_\phi(z|x_0)$ for some observation $x_0$ by minimize the KL divergence at $x=x_0$ One can show that minimizing KL divergence is equivalent to maximizing **Evidence Lower Bound (ELBO)** $$ \text{ELBO} \equiv \underbrace{\mathbb{E}\_{z \sim q\_\phi(z|x\_0)} \left[\ln p\_\theta(x\_0,z)\right]}\_{\rm "Negative\; energy"} - \underbrace{\mathbb{E}\_{z \sim q\_\phi(z|x\_0)} \left[\ln q\_\phi(z|x\_0)\right]}\_{\rm Entropy} $$ ELBO maximization w.r.t. model parameters $\theta$ and variational parameters $\phi$ leads to - Variational posterior $q\_\phi(z|x_0)$ for data $x_0$ - Large model evidence $p\_\theta(x_0)$ .footnote[See e.g. Hoffman et al. 2016, “ELBO Surgery: Yet Another Way to Carve up the Variational Evidence Lower Bound.” http://approximateinference.org/accepted/HoffmanJohnson2016.pdf] --- # Exact vs approx. GP .grid[ .kol-1-2[ ## Properties of our approximate GP - No log-determinant evaluation (happens on-the-fly as part of ELBO maximization) - Can model complex kernels - Reasonable agreement with exact Gaussian process regression ] .kol-1-2[ .center.width-100[] ] ] .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Image reconstruction works well .center.width-100[] - We quickly can find lensing parameters and source parameters that reproduce the image - Hyper-parameter fits optimize variations in the source .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Source reconstruction .center[ .width-35[] .width-55[] ] - Besides good mean values, we obtain uncertainties .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Source layers differ in smoothness ## Mean values .center.width-80[] ## Uncertainties .center.width-80[] .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Full posteriors (with Sersic source) .center[.width-60[]] .footnote[Image credit: Preliminary, Karchev+ 2020 in preparation] --- # Towards targeted inference networks .center.red[Training data can now be generated by sampling from the posterior predictive distribution] .center.width-50[] .center[(exagerated illustration)] --- # Step 2) Marginal posterior estimation with inference networks .red.center[Our goal marginal posteriors about properties of substructure in the images, like:] .center[.width-100[]] .large.center[How to train inference network for $p(z|\vec x)$?] .footnote[Image credit: Preliminary, Coogan+ 2020 in preparation] --- # Neural likelihood-free inference .center.red[Our goal is to train an augmented CNN to estimate marginal posteriors for parameters of interest.] .bold[Starting point]: for any pair of observation $x$ and model parameter $\theta$, the goal is to estimate the probability that this pair belongs one of the following classes: .grid[ .kol-1-2[ $H_0$: Data $\vec x$ corresponds to model parameters $\vec z$ $H_1$: Data $\vec x$ and model $\vec z$ are unrelated ] .kol-1-2[ $(\vec x, \vec z) \sim P(\vec x, \vec z) = P(\vec x|\vec z)P(\vec z)$ $(\vec x, \vec z) \sim P(\vec x)P(\vec z)$ ] ] .footnote[See e.g. Louppe+Hermanns 2019 and references therein] --- # Joint vs marginal samples 1) Examples for $H_0$, jointly sampled from $\vec x, z \sim P(\vec x|z) P(z)$ .grid[ .kol-1-6[ .center[Cat] .width-100[] ] .kol-1-6[ .center[Donkey] .width-70[] ] .kol-1-6[ .center[Cat] .width-100[] ] .kol-1-6[ .center[Cat] .width-100[] ] .kol-1-6[ .center[Donkey] .width-100[] ] .kol-1-6[ .center[Donkey] .width-80[] ] ] 2) Examples for $H_1$, marginally sampled from $\vec x, z \sim P(\vec x) P(z)$ .grid[ .kol-1-6[ .center[Donkey] .width-100[] ] .kol-1-6[ .center[Cat] .width-100[] ] .kol-1-6[ .center[Cat] .width-100[] ] .kol-1-6[ .center[Cat] .width-70[] ] .kol-1-6[ .center[Donkey] .width-100[] ] .kol-1-6[ .center[Donkey] .width-80[] ] ] .center[ Data: $\vec x = \text{Image}$; Label: $z \in \{\text{Cat}, \text{Donkey}\}$] .footnote[See Louppe+Hermanns 2019] --- # Loss function .bold[Strategy:] We train a neural network $d_\phi(\vec x, z) \in [0, 1]$ as binary classifier to estimate the probability of hypothesis $H_0$ or $H_1$. The network output can be interpreted, for a given input pair $\vec x$ and $z$, as probability that $H_0$ is true. - H0 is true: $d_\phi(\vec x, z) \simeq 1$ - H1 is true: $d_\phi(\vec x, z) \simeq 0$ The corresponding loss function is (so-called "binary cross-entroy") $$ L\left[d(\vec x, z)\right] = -\int dx dz \left[ p(\vec x, z) \ln\left(d(\vec x, z)\right) + p(\vec x)p(z) \ln\left(1-d(\vec x, z)\right) \right] $$ -- count: false Minimizing that function (see next slide) w.r.t. the network parameters $\phi$ yields $$ d(\vec x, z) \approx \frac{p(\vec x, z)}{p(\vec x, z) + p(\vec x)p(z)} $$ .footnote[See Louppe+Hermanns 2019] --- # Properties of optimized network ## Some analytical estimates $$ \frac{\partial}{\partial\phi}L \left[d_\phi(\vec x, z)\right] = \int dx dz \left[ p(\vec x, z) \ln\left(d(\vec x, z)\right) + p(\vec x)p(z) \ln\left(1-d(\vec x, z) \right) \right] $$ $$ = \int dx dz \left[ \frac{p(\vec x, z)}{d(\vec x, z)} + \frac{p(\vec x)p(z)}{1-d(\vec x, z) } \right] \frac{\partial d(\vec x, z)}{\partial \phi} $$ Setting the part in square brackets to zero yields that the network is optimized once $$ d(\vec x, z) \approx \frac{p(\vec x, z)}{p(\vec x, z) + p(\vec x)p(z)} $$ --- # Likelihood-to-evidence ratio .red.center[Training binary classification networks yield true Bayesian posterior estimates!] With a bit of math one can show that $$ r(\vec x, z) \equiv \frac{1}{d(\vec x, z)}-1 \simeq \frac{p(\vec x|z)}{p(\vec x)} = \frac{p(z|\vec x)}{p(z)} $$ Once we have trained the network $d_\phi(\vec x, z)$, we can **estimate the posterior** $$ p(z|\vec x) \simeq r(\vec x, z) p(z) $$ --- exclude: true # Approach: Likelihood-to-evidence ratio Assume that the goal is to distriminate parameters $z$ matching an observation $x$ from parameters that do not match an observation. We want to distriminate - $H_0$: $x, z \sim p(x, z)$ - $H_1$: $x, z \sim p(x)p(z)$ We train a binary classifier to discriminate between $(x, z)$ pairs drawn from the joint distribution $p(x, z)$ and the marginal distributions $p(x)p(z)$. This loss function is given by $$\ell = \int dx dz \left( p(x, z)\ln d(x, z) +p(x)p(z)\ln [1-d(x, z)] \right)$$ One can then show that the trained neural network $d(x, z) \in [0, 1]$ satisfies $$r(x,z)\equiv\frac{d(x, z)}{1-d(x, z)} = \frac{p(x|z)}{p(x)}$$ which is the likelihood-to-evidence ratio. Training only requires simulation/samples, and side-steps any likelihood marginalization. .footnote[e.g. Hermans, Louppe, 2019] --- # The inference network .center.red[We use a very simple network structure (low capacity, fast to train).] ## Three conv layers .center[$\vec x = \text{conv2d}(\vec x)$] .center[$\vec x = \text{conv2d}(\vec x)$] .center[$\vec f = \text{conv2d}(\vec x)$] ## Concatenating features from CNN, $\vec f$ with model parameters $z$ .center[$\vec f' = \text{concat}(\vec f, z)$] ## Three dense layers .center[$r(x, z) = \text{MLP}(\vec f')$] --- class: center, middle We used variational inference fit from above to generate training data for a neural network that can recognize individual subhalos. --- # Strong lensing image analysis .center.width-80[] The shown posteriors are effectively marginalized over thousands of source and lens parameters. Those marginal posteriors can be evaluated in seconds once the network is trained (and training takes maybe 20 min). .footnote[Preliminary, Coogan+ 2020 in preparation] --- class: center, middle, black-slide background-image: url(figures/A_Horseshoe_Einstein_Ring_from_Hubble.jpeg) <br><br><br><br><br><br><br><br><br><br><br> .center.large[It works: Similar sensitivity to full likelihood analysis, but much much more flexible.] --- class: center # Next steps <br> <br> Fully atomatize analysis pipeline -- count: false Inference of large number of subhalos -- count: false Inference of cutoff of small-scale structure -- count: false Application to real images (not just mock images) -- count: false Extension to radio data? --- exclude: true # Spinoff I: Nested posterior estimation The idea of targeted inference networks can be generalized. --- exclude: true # Spinoff II: Analysis of $\gamma$-ray data --- # Conclusions - The analysis of .red[gravitationally strongly lensed galaxy images] enables to study the substructure of dark matter. - Extracting information from these images requires .red[sub-percent accuracy] of the analysis. - We developed a .underline.red[two-step analysis pipeline]. - Step 1) Source and main lens reconstruction with .red[variational inference], including uncertainties. This enables the generation of .red[targetd training data]. - Step 2) Training of an .red[targeted inference network] to perform substructure analysis. - The approach enables to study the combined effect of thousands of subhalos, which is .red[intractable with traditional techniques]. - Lots of .red[spin-off work] (dark matter searches with gamma-ray data, gravitational wave parameter inference, ...) -- count: false .large.center[Thank you!] <!-- *********************** End of slide content ********************** -->